Table of Contents

- 1. Introduction

- 2. Shared Memory

- 3. Message Passing

- 4. Problems in IPC

- 5. Dining Philosophers Problem

- 6. Producer-Consumer Problem

- 7. Conclusion

- 8. Sources

1. Introduction {#introduction}

In modern operating systems, applications are often not run as a single block. Instead, they are split into multiple processes, each doing its own task. These processes are usually isolated from each other for security and stability reasons. However, in many real-world situations, processes need to communicate and work together.

For example, when you use a web browser, there are multiple processes running at the same time. One handles the user interface, another handles rendering pages, and others handle network requests. For all of this to work properly, these processes need to exchange information.

This is where Inter-Process Communication (IPC) comes in. IPC is a mechanism that allows processes to communicate with each other and coordinate their actions.

Processes can generally be divided into two types:

- Independent processes → do not interact with other processes

- Cooperating processes → share data and depend on each other

Cooperating processes can improve system performance (for example, by running parts of a task in parallel), allow better resource usage, and make systems more modular and organized.

2. Shared Memory {#shared-memory}

Shared memory is one of the most efficient ways for processes to communicate. In this method, two or more processes are given access to a common area in memory.

Normally, each process has its own private memory space, and it cannot access another process’s memory. This is controlled by the operating system. However, when shared memory is used, the OS creates a region of memory that multiple processes can access.

Normal (isolated):

Process A [ private memory ]

Process B [ private memory ]

Process C [ private memory ]

With shared memory:

Process A [ private ] ──┐

Process B [ private ] ──┼──→ [ SHARED REGION ]

Process C [ private ] ──┘

Once this shared memory is created, processes can read and write data directly without involving the operating system every time. This makes shared memory very fast.

However, this also creates a problem. Since multiple processes can access the same memory at the same time, there must be a way to control access. Otherwise, one process might overwrite data while another process is using it.

This is why synchronization mechanisms like mutexes and semaphores are used together with shared memory.
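As a minimal sketch of the idea (POSIX-only, written in Python for brevity), the example below creates an anonymous shared mapping with the standard `mmap` module. It then forks a child that writes an integer straight into that mapping, and the parent reads the value back. The function name `share_value_with_child` is hypothetical. Waiting for the child with `os.waitpid` stands in for real synchronization here. If both sides ran concurrently, the program would need a semaphore or mutex instead.

```python
import mmap
import os
import struct


def share_value_with_child(value: int) -> int:
    """Child writes `value` into shared memory; parent reads it back.

    Hypothetical helper, POSIX-only (relies on os.fork).
    """
    # fileno=-1 creates an anonymous mapping. On Unix the default flag is
    # MAP_SHARED, so parent and child keep seeing the same pages after fork().
    region = mmap.mmap(-1, 8)
    pid = os.fork()
    if pid == 0:
        # Child process: write directly into the shared region.
        # There is no send/receive call for the data itself.
        region[0:8] = struct.pack("q", value)
        os._exit(0)  # exit at once so the child never runs the parent's code
    # Parent process: wait until the child has finished writing.
    # This crude ordering is the only synchronization in this sketch.
    os.waitpid(pid, 0)
    (result,) = struct.unpack("q", region[0:8])
    region.close()
    return result


print(share_value_with_child(42))  # prints 42
```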

Shared memory: pros and cons

- Pro: very fast (no OS involvement per operation)

- Pro: efficient for large data exchange

- Con: requires synchronization (mutex / semaphore)

- Con: risk of race conditions if access is unprotected

3. Message Passing {#message-passing}

Another way processes communicate is through message passing. Instead of sharing memory, processes send messages to each other.

In this model, the operating system acts as a middle layer. Processes do not directly access each other’s memory. Instead, they use system calls:

send(message)

receive(message)

The OS manages communication using structures such as queues or mailboxes. One process sends a message, and another process receives it.

Message passing flow:

Process A OS Process B

───────── ────── ─────────

send(msg) ──────────→ [queue] ──────────→ receive(msg)

Message passing is safer than shared memory because processes remain isolated. It also works well in distributed systems, where processes might be running on different machines.
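A pipe is one concrete way to get the send/receive behavior described above. The sketch below is POSIX-only and written in Python for brevity. It wraps `os.write` and `os.read` in hypothetical `send` and `receive` helpers. The 4-byte length prefix is an assumption added so the receiver knows where one message ends. Because `os.read` blocks until data arrives, the kernel does the waiting, and neither process needs a mutex of its own.

```python
import os


def send(fd: int, message: bytes) -> None:
    # Hypothetical helper: prefix each message with its 4-byte length.
    os.write(fd, len(message).to_bytes(4, "big") + message)


def _read_exact(fd: int, n: int) -> bytes:
    # os.read may return fewer bytes than requested, so loop until we have n.
    data = b""
    while len(data) < n:
        chunk = os.read(fd, n - len(data))
        if not chunk:
            raise EOFError("pipe closed before the full message arrived")
        data += chunk
    return data


def receive(fd: int) -> bytes:
    # Hypothetical helper: blocks inside os.read until the sender writes.
    size = int.from_bytes(_read_exact(fd, 4), "big")
    return _read_exact(fd, size)


def ping_via_pipe(text: str) -> str:
    read_end, write_end = os.pipe()  # kernel-managed one-way channel
    pid = os.fork()
    if pid == 0:
        # Child (the sender): send one message, then exit.
        os.close(read_end)
        send(write_end, text.encode())
        os.close(write_end)
        os._exit(0)
    # Parent (the receiver): wait for and read the message.
    os.close(write_end)
    message = receive(read_end)
    os.close(read_end)
    os.waitpid(pid, 0)
    return message.decode()


print(ping_via_pipe("hello"))  # prints hello
```

The data passes through a kernel buffer on its way from one process to the other. That extra step is where the isolation of message passing comes from, and it is also the source of its extra cost.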

Common implementations:

| Method | Use case |
|---|---|
| Pipes | communication between related processes (for example, a parent and its child) |
| Sockets | network communication (also usable locally, via Unix domain sockets) |
| Remote Procedure Calls (RPC) | calling functions across machines |

Although message passing is safer, it is usually slower than shared memory. The operating system is involved in every communication step: each send and receive is a system call, and the data is usually copied through the kernel.

Shared memory vs. message passing:

| Property | Shared Memory | Message Passing |
|---|---|---|
| Speed | Fast | Slower |
| OS involvement | Setup only | Every operation |
| Process isolation | Partial (the shared region is exposed) | Yes |
| Distributed use | No | Yes |
| Sync required | Yes | No (OS handles it) |

4. Problems in IPC {#problems}

Even though IPC is necessary, it introduces several challenges. When multiple processes access shared resources, problems can occur if proper synchronization is not used.

Common IPC problems:

- Race condition → multiple processes access the same data at the same time (at least one of them writing), so the result depends on timing and becomes unpredictable

- Deadlock → processes wait on each other, none can continue, and the system is stuck

- Starvation → a process never gets access to a resource and keeps waiting indefinitely

These problems show why coordination between processes is very important.
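To make the race condition concrete, the Python sketch below first replays, step by step, one bad interleaving of two processes that each try to increment a shared counter. This is the classic "lost update". It then shows the same update protected by a lock. Threads stand in for processes here because they share memory by default. The function names are illustrative.

```python
import threading


def lost_update() -> int:
    """Replay one unlucky interleaving of two unsynchronized increments."""
    counter = 0
    a_local = counter      # Process A reads 0
    b_local = counter      # Process B reads 0 before A writes back
    counter = a_local + 1  # A writes 1
    counter = b_local + 1  # B also writes 1: A's update is lost
    return counter


def locked_increments(workers: int = 4, per_worker: int = 10_000) -> int:
    """Each increment runs inside a critical section guarded by a mutex."""
    counter = 0
    lock = threading.Lock()

    def work() -> None:
        nonlocal counter
        for _ in range(per_worker):
            with lock:  # only one thread may read-modify-write at a time
                counter += 1

    threads = [threading.Thread(target=work) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter


print(lost_update())        # prints 1, although two increments ran
print(locked_increments())  # prints 40000
```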

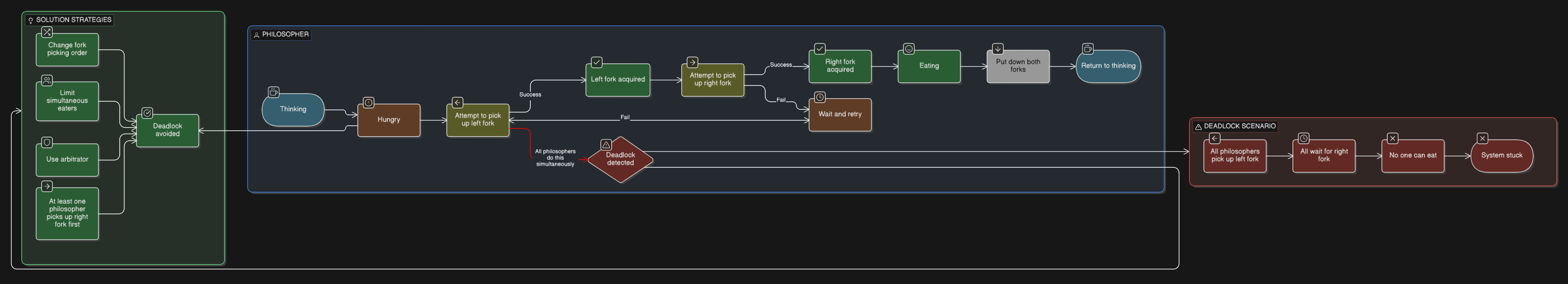

5. Dining Philosophers Problem {#dining-philosophers}

One of the most famous problems related to IPC is the Dining Philosophers Problem.

In this problem, five philosophers sit around a table. Each philosopher needs two forks to eat, but there are only five forks, one between each pair of philosophers.

If each philosopher picks up the fork on their left at the same time, none of them can pick up a second fork, because every right-hand fork is already held by a neighbor. No one can eat and no one puts a fork down, so the system is stuck: this is a deadlock.
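This deadlock can be reproduced deterministically. The Python sketch below models philosophers as threads and forks as `threading.Lock` objects. A `threading.Barrier` forces the worst-case timing: every philosopher holds their left fork before anyone reaches for the right one. A second barrier keeps each left fork held until all attempts are over. The timeout on the second `acquire` is an addition so that the demo reports the deadlock instead of hanging forever. The function name `naive_dinner` is illustrative.

```python
import threading


def naive_dinner(n: int = 5, timeout: float = 0.2) -> list[bool]:
    """Return, per philosopher, whether they managed to get both forks."""
    forks = [threading.Lock() for _ in range(n)]
    everyone_has_left = threading.Barrier(n)
    attempts_done = threading.Barrier(n)
    got_both = [False] * n

    def philosopher(i: int) -> None:
        left, right = forks[i], forks[(i + 1) % n]
        with left:  # step 1: everyone picks up the left fork
            everyone_has_left.wait()
            # step 2: the right fork is a neighbor's left fork, already taken
            if right.acquire(timeout=timeout):
                got_both[i] = True
                right.release()
            attempts_done.wait()  # hold the left fork until all have tried

    threads = [threading.Thread(target=philosopher, args=(i,)) for i in range(n)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return got_both


print(naive_dinner())  # prints [False, False, False, False, False]
```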

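Common solutions:

| Solution | How it works |
|---|---|
| Semaphores | control access to each fork (on their own, one semaphore per fork still allows the deadlock above, so they are combined with one of the rules below) |
| Limit concurrent eaters | only 4 philosophers may try to eat at once |
| Ordered fork pickup | always pick up the lower-numbered fork first |

The last two rules each break the circular wait, in which every philosopher holds one fork and waits for the next. As a result, the system keeps running without getting stuck.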
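Below is a minimal sketch of the ordered-pickup rule in Python. Philosophers are modeled as threads and forks as `threading.Lock` objects; the function name `ordered_dinner` is illustrative. Every philosopher grabs the lower-numbered of their two forks first. Philosopher 4, whose forks are 4 and 0, therefore reaches for fork 0 first, and that is exactly what breaks the cycle.

```python
import threading


def ordered_dinner(n: int = 5, meals: int = 3) -> list[int]:
    """Each philosopher eats `meals` times; returns the meal count per seat."""
    forks = [threading.Lock() for _ in range(n)]
    eaten = [0] * n

    def philosopher(i: int) -> None:
        left, right = i, (i + 1) % n
        first, second = min(left, right), max(left, right)
        for _ in range(meals):
            with forks[first]:       # always the lower-numbered fork first
                with forks[second]:  # then the higher-numbered one
                    eaten[i] += 1    # "eat" while holding both forks

    threads = [threading.Thread(target=philosopher, args=(i,)) for i in range(n)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return eaten


print(ordered_dinner())  # prints [3, 3, 3, 3, 3]
```

The sketch assumes at least two philosophers. With a single seat, both "forks" would be the same lock.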

6. Producer-Consumer Problem {#producer-consumer}

Another important IPC problem is the Producer-Consumer Problem.

In this scenario:

- Producers → generate data and place it in a shared, fixed-size buffer

- Consumers → take data by removing it from the shared buffer

The problem occurs at the boundary conditions:

- Buffer FULL → a producer tries to add more → overflow / overwrite

- Buffer EMPTY → a consumer tries to read → nothing to consume

Visualized:

Producer Buffer Consumer

──────── ──────── ────────

generate() ──→ [ _ ][ _ ][ D ][ D ][ D ] ──→ consume()

State: has space → producer can write

has data → consumer can read

To solve this, synchronization is required:

| Mechanism | Role |
|---|---|
| mutex | ensures only one process accesses the buffer at a time |
| semaphore (empty slots) | tracks available space; the producer waits on it when the buffer is full |
| semaphore (full slots) | tracks available data; the consumer waits on it when the buffer is empty |

This ensures that producers and consumers operate correctly without interfering with each other.
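The three mechanisms in the table map directly onto the classic bounded-buffer solution. The minimal Python sketch below uses threads as stand-ins for producer and consumer processes, and its names (`BoundedBuffer`, `empty_slots`, `full_slots`, `run`) are illustrative. The producer waits on `empty_slots` when the buffer is full, the consumer waits on `full_slots` when it is empty, and the mutex guards the buffer itself.

```python
import threading
from collections import deque


class BoundedBuffer:
    def __init__(self, capacity: int) -> None:
        self.items: deque = deque()
        self.mutex = threading.Lock()                     # one accessor at a time
        self.empty_slots = threading.Semaphore(capacity)  # free space left
        self.full_slots = threading.Semaphore(0)          # items ready to read

    def put(self, item) -> None:
        self.empty_slots.acquire()  # blocks while the buffer is FULL
        with self.mutex:
            self.items.append(item)
        self.full_slots.release()   # signal: one more item available

    def get(self):
        self.full_slots.acquire()   # blocks while the buffer is EMPTY
        with self.mutex:
            item = self.items.popleft()
        self.empty_slots.release()  # signal: one more free slot
        return item


def run(n_items: int = 10, capacity: int = 3) -> list:
    buffer = BoundedBuffer(capacity)
    received = []

    def producer() -> None:
        for i in range(n_items):
            buffer.put(i)

    def consumer() -> None:
        for _ in range(n_items):
            received.append(buffer.get())

    threads = [threading.Thread(target=producer), threading.Thread(target=consumer)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return received


print(run())  # prints [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
```

With one producer and one consumer, items arrive in order. With several of each, the semaphores still prevent overflow and underflow, but the order of items from different producers is not guaranteed.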

7. Conclusion {#conclusion}

Inter-Process Communication is a key concept in operating systems. Even though processes are isolated for safety, they often need to communicate to complete tasks efficiently.

Two main IPC approaches:

- Shared Memory → fast, direct, needs synchronization

- Message Passing → safe, structured, OS-managed

However, IPC also introduces challenges such as race conditions and deadlocks. Problems like the Dining Philosophers and Producer-Consumer highlight the importance of proper coordination between processes.

Understanding IPC is essential for anyone working in computing fields such as software development, cybersecurity, or systems administration, because it explains how processes interact inside an operating system.

8. Sources {#sources}

- Abraham Silberschatz, Peter B. Galvin, Greg Gagne: Operating System Concepts

- Andrew S. Tanenbaum: Modern Operating Systems

- GeeksforGeeks: Inter Process Communication (IPC)

- TutorialsPoint: Operating System IPC

- MIT OpenCourseWare: Operating Systems lectures